AI Performance With Fiber Networks: Best Expert Guide

Discover how fiber networks boost AI performance through reduced latency, faster data transmission, and optimized real-time analytics for edge computing.

Introduction

Key Takeaways

- Fiber networks dramatically reduce latency in edge AI systems, enabling real-time data processing and decision-making

- Strategic fiber deployment transforms AI performance by supporting faster data transmission and optimized analytics capabilities

- Network infrastructure quality directly impacts the effectiveness of AI models operating at the edge

- Professional experience shows that addressing connectivity bottlenecks unlocks the full potential of edge AI applications

- Understanding the relationship between fiber technology and AI performance is essential for successful implementation

A few years back, I was working with a client who was struggling with latency issues in their edge AI applications. They were utilizing real-time data to drive decision-making processes, but the sluggish response times were a significant bottleneck. This was where the deployment of a robust fiber network became a game-changer.

By increasing transmission capacity and reducing latency, the new network let their AI systems process information in near real time. The improvement wasn't incremental; it fundamentally changed how their business operated. Responses that once took seconds now arrived in milliseconds, enabling truly responsive AI-driven decisions.

This experience taught me the critical role that fiber networks play in supporting advanced technologies. The synergy between fiber infrastructure and edge AI systems isn't just about speed; it's about creating a seamless flow of data that optimizes performance. When network connectivity becomes a bottleneck, even the most sophisticated AI models can't deliver their full value.

The Growing Importance of AI Performance at the Edge

As organizations increasingly deploy AI capabilities closer to data sources, the demands on network infrastructure have intensified. Edge AI requires processing vast amounts of information with minimal delay, making the quality of connectivity a make-or-break factor. Traditional network solutions often struggle to meet these requirements, creating performance gaps that limit what's possible.

Fiber optic technology addresses these challenges head-on. By enabling faster data transmission and supporting real-time analytics, fiber networks unlock the potential of edge AI deployments. The difference isn't subtle: it separates AI systems that respond in real time from those that lag behind the pace of business.

Throughout this guide, we'll explore how fiber networks enhance edge AI performance through reduced latency, increased throughput, and superior reliability. You'll discover practical strategies for deployment, learn about the technical advantages that make fiber indispensable, and understand how to design network infrastructure that maximizes AI capabilities. Whether you're planning your first edge AI implementation or optimizing existing systems, the insights ahead will help you build a foundation for success.


Table of Contents

- Introduction

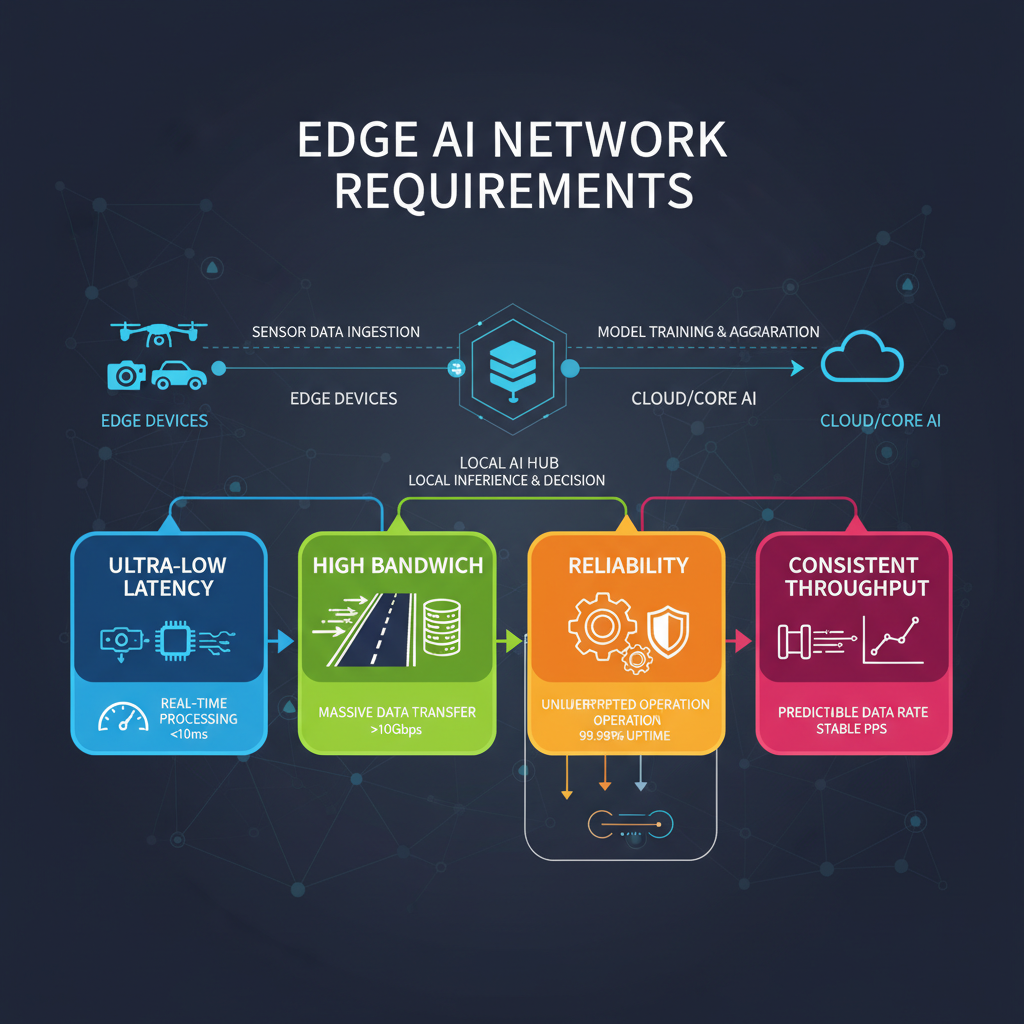

- Understanding Edge AI and Its Network Requirements

- Why Fiber Optic Technology Outperforms Traditional Connectivity

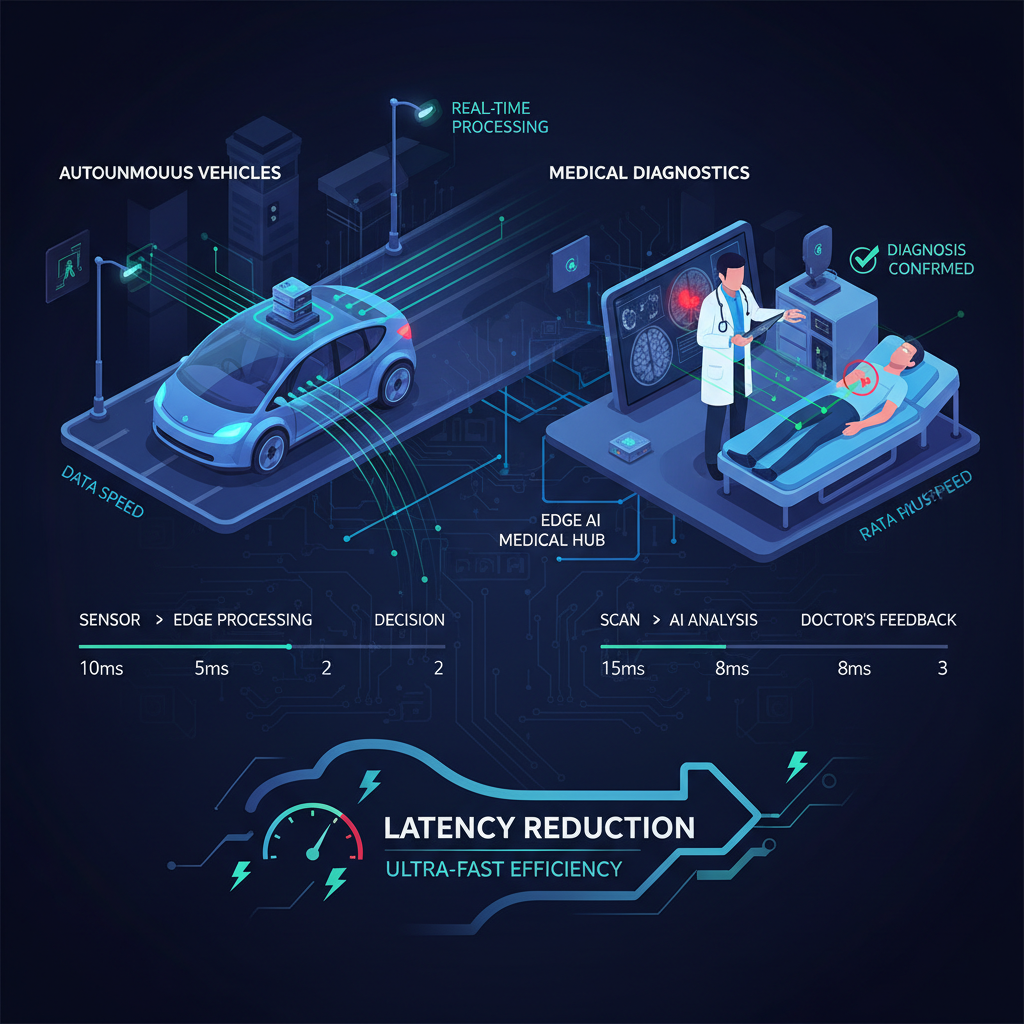

- How Fiber Networks Enhance Edge AI Performance Through Latency Reduction

- Maximizing Data Throughput for AI Model Performance

- Ensuring Continuous AI Operations with Fiber Reliability

- Connecting Distributed Edge AI Nodes Effectively

- AI Performance Optimization Through Strategic Network Design

- Practical Deployment Considerations for Fiber-Enabled Edge AI

- Conclusion

Understanding Edge AI and Its Network Requirements

Edge AI represents a fundamental shift in how artificial intelligence processes information. Rather than sending data to distant cloud servers for analysis, edge AI runs trained models locally on hardware positioned close to where data originates. This approach removes the dependency on centralized cloud resources for inference, bringing computational power directly to the point of need.

The rationale behind local processing is straightforward: speed and efficiency. When AI systems operate at the edge, they bypass the round-trip journey to remote data centers. For applications requiring split-second decisions—think autonomous vehicles navigating traffic or industrial robots adjusting to production line changes—every millisecond counts. Local processing ensures these systems respond immediately to changing conditions.

Network Demands That Enable Edge AI

Edge AI systems place extraordinary demands on network infrastructure. These requirements go far beyond basic connectivity, shaping how organizations design and deploy their network architectures.

Ultra-low latency stands as the most critical requirement. Edge AI applications often operate in environments where delays measured in milliseconds can mean the difference between success and failure. Real-time analytics platforms processing sensor data need networks that deliver information without perceptible lag.

High bandwidth capacity ensures AI models receive the volume of data they need to function effectively. Edge deployments frequently handle continuous streams from multiple sensors, cameras, and IoT devices simultaneously. The network must support real-time feedback loops and accommodate sudden surges in sensor data or control messages without degradation.

Reliability and consistent throughput form the foundation of dependable AI operations. Edge AI systems can't afford intermittent connectivity or unpredictable performance. Networks must maintain stable connections even under varying load conditions, supporting dynamic bandwidth scaling when applications demand it.

Real-World Applications Driving Network Requirements

The specific network demands of edge AI become clear when examining practical deployments across industries.

Autonomous systems rely on edge AI to process vast amounts of sensor data in real time. These systems must analyze their environment, make decisions, and execute actions within timeframes measured in fractions of a second. Network latency directly impacts their ability to respond to dynamic conditions safely and effectively.

Industrial IoT environments deploy edge AI to optimize manufacturing processes, predict equipment failures, and maintain quality control. Production lines generate continuous data streams from hundreds or thousands of sensors. The network infrastructure must handle this constant flow while supporting the AI models that transform raw data into actionable insights.

Real-time analytics platforms process information as it arrives, enabling immediate business decisions. Whether monitoring supply chains, analyzing customer behavior, or detecting security threats, these systems depend on networks that deliver data consistently and without delay. The edge network must support the computational demands of AI models while maintaining the responsiveness that makes real-time analytics valuable.

These applications share a common thread: they cannot function effectively without network infrastructure designed specifically to meet their performance requirements. The network becomes an integral component of the AI system itself, not merely a conduit for data transmission.


Why Fiber Optic Technology Outperforms Traditional Connectivity

When it comes to supporting AI workloads at the edge, not all network infrastructure is created equal. Fiber optic technology stands apart from copper and wireless alternatives through fundamental physical advantages that directly translate into superior AI performance.

Light-Speed Data Transmission

Fiber optics transmit data as pulses of light through glass or plastic strands. Light in glass actually travels at roughly two-thirds of its vacuum speed, about 5 microseconds per kilometer, which is comparable to how fast electrical signals propagate in copper, so fiber's advantage is not raw propagation speed. What sets it apart is that light-based transmission supports far higher data rates and loses far less signal over distance than metal conductors. Those properties cut the time each packet spends being serialized, queued, and retransmitted, which becomes critical when AI models need to process and respond to data in real time.

For AI applications requiring rapid inference cycles, this capacity advantage directly affects how quickly models can receive input data, process it, and return actionable results. The less time data spends waiting in the network, the faster AI systems can make decisions.
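To put rough numbers on this, the sketch below estimates one-way delay as propagation time plus the time to serialize one frame onto the link. It assumes a group index of about 1.47 for silica fiber; the 50 km distance, 1500-byte frame, and link rates are illustrative values, not measurements.

```python
# Speed of light in vacuum, in km per second.
C_VACUUM_KM_S = 299_792.458
# Assumed group index of standard silica single-mode fiber (approximate).
FIBER_INDEX = 1.47


def propagation_delay_ms(distance_km: float, index: float = FIBER_INDEX) -> float:
    """Time for light to travel distance_km through fiber, in milliseconds."""
    return distance_km / (C_VACUUM_KM_S / index) * 1000.0


def serialization_delay_ms(frame_bytes: int, link_gbps: float) -> float:
    """Time to clock one frame onto a link of the given rate, in milliseconds."""
    return frame_bytes * 8 / (link_gbps * 1e9) * 1000.0


if __name__ == "__main__":
    # Illustrative: a 1500-byte frame sent to an edge node 50 km away.
    for gbps in (1, 10, 100):
        total = propagation_delay_ms(50) + serialization_delay_ms(1500, gbps)
        print(f"{gbps:>3} Gbps: {total:.4f} ms one way")
```

At 50 km, propagation contributes roughly 0.25 ms while serializing a single frame takes 0.012 ms even at 1 Gbps. Higher link rates matter less for one packet than for the queues that build up when many streams share a link, which is where fiber's capacity pays off.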

Immunity to Electromagnetic Interference

Copper cables are susceptible to electromagnetic interference from nearby electrical equipment, power lines, and radio frequencies. This interference can corrupt data packets, requiring retransmission and introducing unpredictable delays. Fiber optic cables, transmitting light rather than electricity, remain completely immune to these disruptions.

This reliability is essential for AI systems that depend on consistent, error-free data streams. In industrial environments with heavy machinery or medical facilities with sensitive equipment, fiber ensures AI models receive clean data without the noise that can degrade performance or accuracy.

Higher Bandwidth Capacity

Fiber optic networks offer substantially greater bandwidth capacity compared to traditional copper or wireless connections. A single fiber strand can carry multiple wavelengths of light simultaneously, each transmitting independent data streams at high speeds. This massive capacity supports the data-intensive nature of AI operations.

AI model training requires moving large datasets between storage systems and processing nodes. Inference workloads, while less data-intensive per transaction, often involve thousands of simultaneous requests. Fiber's high-capacity data flow enables these operations to run concurrently without creating bottlenecks that slow down overall AI performance.

Lower Signal Degradation Over Distance

Copper cables experience significant signal degradation over distance: twisted-pair Ethernet segments, for example, are limited to 100 meters (about 328 feet) before a switch or repeater is needed. Wireless connections face similar challenges, with signal strength dropping as distance increases or obstacles interfere with transmission. Single-mode fiber, by contrast, typically loses only a few tenths of a decibel per kilometer, so standard optics such as 10GBASE-LR span 10 kilometers without any intermediate equipment.

For distributed edge AI deployments spanning multiple locations, this property means bandwidth and signal quality hold up regardless of the physical distance between nodes. AI systems at remote edge locations can get the same link capacity as those closer to central hubs, although propagation delay still grows with distance at roughly 5 microseconds per kilometer.
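A link loss budget shows why fiber spans can run for kilometers without repeaters. The sketch below assumes typical planning values (about 0.35 dB/km single-mode attenuation at 1310 nm, 0.5 dB per connector pair, 0.1 dB per fusion splice) and hypothetical transceiver figures; substitute the numbers from your own optics' data sheets.

```python
from dataclasses import dataclass


@dataclass
class FiberLink:
    length_km: float
    connectors: int
    splices: int
    attenuation_db_per_km: float = 0.35  # assumed single-mode loss at 1310 nm
    connector_loss_db: float = 0.5       # assumed loss per mated connector pair
    splice_loss_db: float = 0.1          # assumed loss per fusion splice

    def total_loss_db(self) -> float:
        """Fiber attenuation plus connector and splice losses."""
        return (self.length_km * self.attenuation_db_per_km
                + self.connectors * self.connector_loss_db
                + self.splices * self.splice_loss_db)


def remaining_margin_db(link: FiberLink, tx_power_dbm: float, rx_sensitivity_dbm: float) -> float:
    """Power budget minus link loss; a positive result means the link should close."""
    budget = tx_power_dbm - rx_sensitivity_dbm
    return budget - link.total_loss_db()


if __name__ == "__main__":
    # Hypothetical optics: -1 dBm launch power, -14 dBm receiver sensitivity.
    link = FiberLink(length_km=10, connectors=2, splices=4)
    print(f"loss {link.total_loss_db():.2f} dB, "
          f"margin {remaining_margin_db(link, -1, -14):.2f} dB")
```

With these assumptions the 10 km link loses about 4.9 dB against a 13 dB power budget, leaving roughly 8 dB of margin; a common practice is to keep a few decibels in reserve for aging, repairs, and dirty connectors.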

Direct Impact on AI Model Performance

These technical advantages combine to create an optimal environment for both AI training and inference operations. Training workloads benefit from fiber's ability to rapidly move massive datasets between storage and compute resources, reducing the time required to iterate through training cycles. Inference workloads gain from ultra-low latency and consistent throughput, enabling real-time responses.

The reliability and speed of fiber networks mean AI models spend less time waiting for data and more time processing it. This efficiency translates directly into faster decision-making, whether the AI is analyzing sensor data from industrial equipment, processing video feeds for security applications, or supporting autonomous systems that require split-second responses.


How Fiber Networks Enhance Edge AI Performance Through Latency Reduction

When it comes to edge AI, latency isn't just a technical metric—it's the difference between success and failure. Every millisecond counts when AI systems need to make split-second decisions. Fiber networks fundamentally transform this equation by reducing round-trip times to single-digit milliseconds, creating the foundation for truly responsive AI applications.

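With traditional infrastructure, round trips to a distant cloud region over congested or wireless links can easily take tens of milliseconds, and 50-100 milliseconds or more is not unusual. Processing data at a nearby fiber-connected edge node shortens both the physical distance and the number of congested hops, which brings that number down dramatically. This reduction enables AI models to process data and deliver insights at speeds that match the pace of real-world events.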

Why Latency Matters for AI Inference Speed

AI inference—the process where trained models analyze new data and generate predictions—depends heavily on how quickly data can travel between sensors, processing nodes, and output systems. When data transmission is slow, even the fastest AI algorithms become bottlenecked.

Fiber networks deliver the low-latency, high-capacity performance required for AI data workloads. High link rates and low error rates keep serialization, queuing, and retransmission delays small, so when processing happens at a nearby edge node, AI models can receive input and return results in near real time. This capability transforms what's possible with edge AI deployments.

Real-World Applications Where Milliseconds Matter

Consider autonomous vehicles navigating busy streets. The AI systems must process sensor data, identify obstacles, and adjust steering or braking in fractions of a second. A delay of even 50 milliseconds could mean the difference between a safe maneuver and a collision. Because the vehicle itself connects wirelessly, fiber's role is to link the roadside and edge nodes that exchange data with it, so that the network behind the radio link adds as little delay as possible.
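The arithmetic behind "milliseconds matter" is easy to check: a vehicle keeps moving while data is in transit. The sketch below converts a latency into distance traveled; the 100 km/h speed and the delays are illustrative.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Meters traveled at speed_kmh during delay_ms of latency."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * delay_ms / 1000


if __name__ == "__main__":
    for delay in (5, 50, 100):
        print(f"{delay:>3} ms at 100 km/h -> {distance_during_delay_m(100, delay):.2f} m")
```

At 100 km/h, a 50 ms delay corresponds to roughly 1.4 meters of travel, compared with about 0.14 meters at 5 ms.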

In medical diagnostics, AI-powered imaging analysis needs to provide immediate feedback during procedures. Surgeons and radiologists rely on real-time insights to guide critical decisions. When fiber networks reduce latency to single-digit milliseconds, medical AI can deliver diagnostic support that keeps pace with clinical workflows.

Financial trading represents another domain where latency directly impacts outcomes. AI algorithms analyzing market conditions and executing trades operate in a world where milliseconds can translate into significant gains or losses. Fiber connectivity helps these systems react to market changes faster than competitors using slower infrastructure.

Manufacturing quality control systems use AI to inspect products at production line speeds. As items move through assembly processes, edge AI must analyze visual data, detect defects, and trigger corrective actions before faulty products advance. The reduced latency from fiber networks makes this continuous, real-time quality assurance feasible.

The Technical Foundation of Low-Latency AI

The relationship between network performance and AI capability is straightforward: an application's end-to-end response time is the network transit time plus the inference time. When fiber networks minimize the time data spends in transit, more of a fixed response deadline remains for inference itself. That headroom allows edge systems to run more complex models or process larger data volumes without sacrificing responsiveness.
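One way to reason about this is a fixed end-to-end deadline split between network transit and inference. The sketch below uses a hypothetical 20 ms deadline, a 1 ms fixed overhead, and assumed round-trip times for a nearby fiber-connected edge node and a distant cloud region, then computes how much time is left for the model itself.

```python
def inference_budget_ms(deadline_ms: float, network_rtt_ms: float, overhead_ms: float = 0.0) -> float:
    """Time left for model inference after the network round trip and fixed overhead."""
    return max(0.0, deadline_ms - network_rtt_ms - overhead_ms)


if __name__ == "__main__":
    deadline = 20.0  # hypothetical application deadline, in ms
    # Assumed round-trip times; measure your own paths in practice.
    for label, rtt in (("metro fiber edge", 2.0), ("distant cloud", 45.0)):
        left = inference_budget_ms(deadline, rtt, overhead_ms=1.0)
        print(f"{label}: {left:.1f} ms left for inference")
```

With the assumed 45 ms cloud round trip, the budget is exhausted before any inference runs, so no model is fast enough; shrinking transit time is the only lever left.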

Fiber's immunity to electromagnetic interference also contributes to consistent latency performance. Unlike copper cables that can experience signal degradation in electrically noisy environments, fiber maintains stable transmission speeds. For AI applications requiring predictable performance, this reliability is essential.

Building Responsive AI Ecosystems

Deploying fiber infrastructure creates the foundation for AI systems that can truly operate at the edge. The combination of minimal latency and high bandwidth capacity means multiple AI workloads can run simultaneously without competing for network resources. This scalability allows organizations to expand their edge AI deployments as needs grow.

The performance advantages of fiber become even more pronounced as AI models increase in complexity. When a large model is partitioned across several nodes, intermediate results must travel between them over the network, and any network bottleneck compounds throughout the inference pipeline. Fiber networks remove many of these bottlenecks, reducing the chance that network performance limits AI capability.


Maximizing Data Throughput for AI Model Performance

When deploying AI at the edge, bandwidth becomes the lifeblood of your operations. Modern AI workloads demand massive data throughput—both upstream and downstream—and fiber networks deliver the capacity needed to handle these simultaneous data flows without creating bottlenecks.

The Relationship Between Data Volume and Network Capacity

AI model performance directly correlates with how quickly data can move through your network infrastructure. As model complexity increases, so does the volume of data required for training, inference, and result distribution. Edge AI systems continuously collect sensor data, process it through sophisticated algorithms, and distribute actionable insights back to connected devices—all in real time.

Fiber networks provide the high-capacity foundation that makes this possible. With optical links capable of delivering speeds from 10 Gbps to 100 Gbps and beyond, fiber infrastructure ensures that data streams flow freely between edge nodes, aggregation points, and central processing facilities. This capacity becomes especially critical when multiple AI workloads run concurrently across your deployment.

Supporting Bidirectional AI Traffic

Edge AI operations generate traffic in both directions, and fiber excels at handling this bidirectional demand:

- Upstream traffic: Sensor data, telemetry, video feeds, and IoT device information flowing from edge locations to processing nodes

- Downstream traffic: Model updates, configuration changes, inference results, and control commands distributed back to edge devices

Traditional connectivity options, many of which provide far less upstream than downstream capacity, struggle when both directions compete for limited bandwidth. Fiber networks ease this constraint: dedicated fiber links are typically symmetrical, and many shared fiber services offer symmetrical or near-symmetrical capacity, so neither direction has to become a performance bottleneck.
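Downstream traffic such as model updates is easy to underestimate. The sketch below estimates how long one update takes to reach an edge site; the 2 GB model size, the link rates, and the 80% efficiency factor standing in for protocol overhead are all assumptions.

```python
def transfer_time_s(size_gb: float, link_gbps: float, efficiency: float = 0.8) -> float:
    """Seconds to move size_gb (decimal gigabytes) over a link_gbps link at the given efficiency."""
    bits = size_gb * 8e9
    return bits / (link_gbps * 1e9 * efficiency)


if __name__ == "__main__":
    for gbps in (1, 10, 100):
        print(f"2 GB model over {gbps} Gbps: {transfer_time_s(2, gbps):.1f} s")
```

Under these assumptions the update takes about 20 seconds at 1 Gbps and about 2 seconds at 10 Gbps, a difference that adds up when the same model has to reach many sites.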

Enabling Multiple Concurrent AI Workloads

One of fiber's most valuable characteristics is its ability to carry traffic for multiple AI applications at once without degradation, as long as links are sized for the combined load. Consider an edge deployment running:

- Real-time video analytics for security monitoring

- Predictive maintenance algorithms analyzing equipment sensors

- Natural language processing for customer interactions

- Computer vision systems for quality control

Each workload generates its own data streams and processing requirements. Fiber's high bandwidth capacity gives all of these applications room to receive the network resources they need, maintaining consistent AI performance across your entire deployment.
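Sizing a link for concurrent workloads like these starts with summing their peak demands and leaving headroom. The per-workload rates below (including the assumption of 32 cameras at about 8 Mbps each) and the 30% headroom target are hypothetical placeholders.

```python
# Hypothetical peak demand per workload, in Mbps.
WORKLOADS_MBPS = {
    "video_analytics": 32 * 8.0,       # assumed: 32 cameras at ~8 Mbps each
    "predictive_maintenance": 50.0,
    "nlp_interactions": 20.0,
    "quality_control_vision": 400.0,
}


def link_fits(demands_mbps: dict[str, float], link_mbps: float, headroom: float = 0.3) -> bool:
    """True if total peak demand stays within link capacity minus the headroom fraction."""
    total = sum(demands_mbps.values())
    return total <= link_mbps * (1 - headroom)


if __name__ == "__main__":
    total = sum(WORKLOADS_MBPS.values())
    for link in (1_000, 10_000):
        print(f"{total:.0f} Mbps peak on a {link} Mbps link: fits={link_fits(WORKLOADS_MBPS, link)}")
```

With these placeholder numbers, the combined 726 Mbps peak exceeds the 700 Mbps usable on a 1 Gbps link with 30% headroom, while a 10 Gbps link leaves ample room.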

Scalability for Growing AI Deployments

As your AI initiatives expand, network capacity must scale accordingly. Fiber infrastructure provides built-in scalability advantages that protect your investment. When you need more bandwidth, upgrading often requires only endpoint equipment changes rather than replacing the entire physical infrastructure.

This scalability becomes critical as AI models grow more sophisticated and edge deployments expand to additional locations. The network capacity that supports today's workloads can adapt to tomorrow's demands, ensuring consistent AI performance as your operations evolve.


Ensuring Continuous AI Operations with Fiber Reliability

When AI systems power mission-critical operations—whether in manufacturing, healthcare, or autonomous vehicles—network downtime isn't just inconvenient; it's catastrophic. A single interruption can halt production lines, delay medical diagnoses, or compromise safety systems. This is where fiber optic networks demonstrate their true value, delivering the reliability that edge AI deployments demand.

Fiber's physical properties create inherently stable connections. Unlike copper-based systems vulnerable to electromagnetic interference, temperature fluctuations, and signal degradation, fiber transmits data via light through glass strands. This design eliminates many common failure points that plague traditional networks. The result? Fiber networks experience roughly 70% fewer service interruptions than cable networks over a year, a difference that directly translates to more dependable AI operations.

The Real Cost of AI System Downtime

For edge AI applications, even brief network outages create cascading problems. Real-time analytics stop flowing, predictive models lose access to fresh data, and automated decision-making grinds to a halt. In industrial settings, this can mean missed quality control checks or safety alerts. In retail environments, it disrupts inventory management and customer experience optimization.

The impact extends beyond immediate operational disruptions. AI models that depend on continuous data streams may require recalibration after connectivity gaps, adding recovery time to the initial downtime. Mission-critical applications simply cannot afford these interruptions, making network reliability a non-negotiable requirement rather than a nice-to-have feature.

Building High-Availability Architectures

Fiber infrastructure naturally supports the redundancy strategies essential for continuous AI operations. High-availability architectures typically employ dual fiber paths between edge nodes and central processing hubs, so that if one connection fails, traffic automatically reroutes through the backup path. With fast protection-switching mechanisms, this failover can complete within tens of milliseconds, often fast enough that AI workloads continue without noticeable disruption.

Redundancy extends beyond simple backup connections. Sophisticated fiber deployments incorporate diverse physical routing, where primary and secondary fiber paths follow different geographic routes. This protects against localized damage from construction accidents, natural disasters, or equipment failures. For distributed edge AI networks spanning multiple locations, this geographic diversity becomes critical to maintaining system-wide reliability.
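The benefit of redundancy can be quantified with basic availability arithmetic. The sketch below assumes the paths fail independently, which is exactly what diverse routing tries to approximate; paths sharing a conduit break that assumption. The 99.9% per-path availability is illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60


def parallel_availability(*path_availabilities: float) -> float:
    """Availability of redundant paths, assuming independent failures: 1 - product of unavailabilities."""
    unavailable = 1.0
    for a in path_availabilities:
        unavailable *= (1.0 - a)
    return 1.0 - unavailable


def downtime_minutes_per_year(availability: float) -> float:
    """Expected minutes per year during which the connection is unavailable."""
    return (1.0 - availability) * MINUTES_PER_YEAR


if __name__ == "__main__":
    single = 0.999  # illustrative per-path availability
    dual = parallel_availability(single, single)
    print(f"single path: {downtime_minutes_per_year(single):.1f} min/yr")
    print(f"dual diverse paths: {downtime_minutes_per_year(dual):.2f} min/yr")
```

At 99.9% per path, a single path allows about 526 minutes of downtime a year; two truly independent paths cut that to roughly half a minute.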

Fiber's Advantage in Harsh Environments

Edge AI deployments often operate in challenging conditions—factory floors with heavy machinery, outdoor installations exposed to weather, or remote locations with limited infrastructure support. Fiber's resistance to environmental factors gives it a significant edge over copper alternatives. It doesn't corrode, isn't affected by moisture in the same way, and maintains signal integrity across longer distances without requiring intermediate amplification that introduces additional failure points.

This durability translates directly to lower maintenance requirements and fewer unexpected outages. For AI systems monitoring critical infrastructure or managing automated processes, this reliability ensures consistent performance without the constant attention that less robust network technologies demand.

Monitoring and Proactive Maintenance

Modern fiber networks support sophisticated monitoring capabilities that enable proactive maintenance before issues impact AI operations. Network administrators can detect subtle signal degradation, identify potential failure points, and schedule maintenance during planned downtime windows. This predictive approach to network management aligns perfectly with the proactive nature of AI systems themselves.
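A simple form of proactive monitoring compares each link's received optical power against its baseline and flags gradual loss before the receiver's sensitivity limit is reached. The readings, link names, and 2 dB warning threshold below are hypothetical; in practice the values would typically come from transceiver digital diagnostics (DDM/DOM) polled by your monitoring system.

```python
from typing import NamedTuple


class RxReading(NamedTuple):
    link: str
    baseline_dbm: float  # received power recorded at commissioning
    current_dbm: float   # latest received power reading


def degraded_links(readings: list[RxReading], warn_drop_db: float = 2.0) -> list[str]:
    """Return links whose received power has fallen by at least warn_drop_db from baseline."""
    return [r.link for r in readings if r.baseline_dbm - r.current_dbm >= warn_drop_db]


if __name__ == "__main__":
    readings = [
        RxReading("edge-01<->hub", -6.0, -6.3),
        RxReading("edge-02<->hub", -5.5, -8.1),  # 2.6 dB drop, e.g. a dirty connector or bend
        RxReading("edge-03<->edge-01", -7.2, -7.4),
    ]
    print(degraded_links(readings))
```

Here only the second link would be flagged, giving time to clean or repair it during a planned window.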

When AI workloads depend on continuous connectivity, the combination of fiber's inherent reliability and intelligent monitoring creates a robust foundation. Systems can operate with confidence that network infrastructure won't become the weak link in otherwise sophisticated AI deployments.


Connecting Distributed Edge AI Nodes Effectively

Distributed edge AI architectures represent the next evolution in intelligent infrastructure. Rather than relying on a single centralized point of processing, these systems deploy multiple edge nodes across different locations that work together as a coordinated network. Fiber connectivity forms the essential backbone that makes this collaboration possible.

The challenge with distributed AI systems is maintaining seamless communication between nodes while ensuring each location can process data locally when needed. Fiber networks excel at this dual requirement by providing both the bandwidth for large-scale data synchronization and the low latency necessary for real-time coordination.

Mesh Network Topologies for Edge AI

Mesh network architectures allow edge AI nodes to communicate directly with each other, not just through a central hub. This approach creates multiple pathways for data to travel, improving resilience and reducing bottlenecks. When one node processes information that's relevant to neighboring nodes, fiber connections enable rapid sharing without routing everything through a central data center.

Fiber's high capacity makes mesh topologies practical even when handling the substantial data volumes generated by AI workloads. Each node can maintain multiple fiber connections to its neighbors, creating redundant pathways that enhance both performance and reliability.
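The resilience benefit of a mesh can be seen by finding a path between two nodes before and after a link fails. This sketch runs a breadth-first search over a small hypothetical five-node topology (A through E); production networks rely on routing protocols rather than hand-written searches.

```python
from collections import deque
from typing import Optional


def shortest_path(links: set[frozenset[str]], src: str, dst: str) -> Optional[list[str]]:
    """Breadth-first search for a hop-minimal path; returns None if dst is unreachable."""
    neighbors: dict[str, list[str]] = {}
    for link in links:
        a, b = tuple(link)
        neighbors.setdefault(a, []).append(b)
        neighbors.setdefault(b, []).append(a)
    prev: dict[str, Optional[str]] = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            # Walk back through predecessors to rebuild the path.
            path = []
            cur: Optional[str] = node
            while cur is not None:
                path.append(cur)
                cur = prev[cur]
            return path[::-1]
        for nxt in neighbors.get(node, []):
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None


if __name__ == "__main__":
    mesh = {frozenset(p) for p in [("A", "B"), ("B", "C"), ("A", "D"), ("D", "E"), ("E", "C")]}
    print(shortest_path(mesh, "A", "C"))
    # Simulate a failure of the B-C fiber link.
    print(shortest_path(mesh - {frozenset(("B", "C"))}, "A", "C"))
```

When the B-C link fails, the search finds the longer A-D-E-C route instead of reporting the destination unreachable.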

Enabling Federated Learning Across Sites

Federated learning represents a powerful approach where AI models are trained across multiple distributed nodes without centralizing raw data. Each edge location trains on its local dataset, then shares only model updates rather than the underlying information. This approach preserves privacy while still allowing collaborative improvement of AI models.

Fiber networks facilitate this process by providing the bandwidth needed to synchronize model parameters across distributed sites. The low latency ensures that updates can be shared quickly, allowing the federated learning process to proceed efficiently without long delays between training rounds.
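The aggregation step of federated averaging (FedAvg), one common federated learning algorithm, can be sketched in a few lines: each site sends a parameter vector and its local sample count, and the coordinator computes a sample-weighted mean. Plain lists stand in for real model tensors, and the site data is hypothetical. The traffic estimate shows why bandwidth matters, assuming each round uploads and downloads one full float32 copy of the parameters per site.

```python
def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Sample-weighted mean of per-site parameter vectors (the FedAvg aggregation step)."""
    total_samples = sum(n for _, n in updates)
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for params, n in updates:
        weight = n / total_samples
        for i, value in enumerate(params):
            averaged[i] += weight * value
    return averaged


def round_traffic_gb(num_params: int, num_sites: int, bytes_per_param: int = 4) -> float:
    """Approximate upload plus download volume for one round, assuming float32 parameters."""
    return 2 * num_params * bytes_per_param * num_sites / 1e9


if __name__ == "__main__":
    sites = [([0.2, 1.0], 100), ([0.4, 3.0], 300)]  # hypothetical site updates
    print(federated_average(sites))
    print(f"{round_traffic_gb(100_000_000, 20):.1f} GB per round "
          f"for a 100M-parameter model across 20 sites")
```

With these sample counts, the second site's update carries three times the weight of the first. The traffic estimate, about 16 GB per round, ignores any compression of updates.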

Hybrid Edge-Cloud Coordination

Many deployments adopt hybrid architectures that combine edge processing with cloud resources. Edge nodes handle time-sensitive inference locally, while more complex training and model optimization occur in centralized cloud environments. Fiber connectivity bridges these two tiers, enabling seamless coordination.

This hybrid approach allows organizations to optimize resource allocation. Routine AI tasks run at the edge for speed, while computationally intensive operations leverage cloud infrastructure. Fiber networks provide the high-throughput connections necessary to move models, updates, and aggregated data between edge and cloud efficiently.

Load Balancing Across Distributed Infrastructure

Distributed edge AI systems benefit from intelligent load balancing that directs workloads to the most appropriate node based on current capacity and proximity to data sources. Fiber networks support this dynamic allocation by providing the low-latency connections needed for real-time coordination between nodes.

When one edge location experiences high demand, fiber connectivity allows workloads to be shifted to neighboring nodes with available capacity. This flexibility maximizes overall system utilization and prevents individual nodes from becoming overwhelmed.
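A simple latency-aware placement policy picks the node that minimizes estimated network delay plus queueing delay. The node names, round-trip times, queue depths, and per-request service times below are hypothetical, and the estimate assumes each node works through its queue one request at a time; real schedulers use richer signals.

```python
from dataclasses import dataclass


@dataclass
class EdgeNode:
    name: str
    rtt_ms: float            # measured round trip from the data source to this node
    queued_requests: int     # requests already waiting at this node
    service_time_ms: float   # average inference time per request

    def estimated_latency_ms(self) -> float:
        # Network round trip, plus waiting for queued work, plus this request's own inference.
        return self.rtt_ms + (self.queued_requests + 1) * self.service_time_ms


def pick_node(nodes: list[EdgeNode]) -> EdgeNode:
    """Choose the node with the lowest estimated end-to-end latency."""
    return min(nodes, key=lambda n: n.estimated_latency_ms())


if __name__ == "__main__":
    nodes = [
        EdgeNode("local", rtt_ms=0.5, queued_requests=40, service_time_ms=4.0),
        EdgeNode("neighbor", rtt_ms=1.5, queued_requests=2, service_time_ms=4.0),
    ]
    print(pick_node(nodes).name)
```

With these numbers the busy local node would take about 164.5 ms, so the request goes to the neighbor at about 13.5 ms despite its longer network path; fiber's low inter-node latency is what keeps that detour cheap.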

Multi-Site Deployment Coordination

Deploying AI across multiple sites requires careful coordination of model versions, configuration parameters, and operational policies. Fiber networks enable centralized management systems to push updates to distributed nodes quickly and reliably. This ensures consistency across the entire deployment while allowing for site-specific customization when needed.

Synchronization becomes particularly important when AI models are updated. Fiber's high bandwidth allows new model versions to be distributed to all edge nodes rapidly, minimizing the time when different locations operate with inconsistent AI capabilities. This coordination is essential for maintaining uniform performance and decision-making across distributed operations.


AI Performance Optimization Through Strategic Network Design

Optimizing fiber networks for AI workloads requires a strategic approach that balances performance, reliability, and security. As AI applications become more demanding, network design must evolve to support real-time processing, massive data flows, and distributed computing architectures. The right configuration can mean the difference between AI systems that deliver instant insights and those that struggle with bottlenecks.

Quality of Service Configuration for AI Workloads

Quality of Service (QoS) configuration ensures that AI traffic receives the priority it needs to maintain consistent performance. By classifying and prioritizing AI-related data packets, you can keep critical workloads from waiting behind less important traffic when a link is congested. This becomes especially important when multiple applications share the same fiber infrastructure, because priority rules matter most when links are busy.

Implementing traffic prioritization involves identifying AI data streams and assigning them higher priority levels. Real-time inference traffic, for example, typically requires lower latency than model training data transfers. Configure your network equipment to recognize these traffic types and queue them accordingly. This is commonly done by marking packets with DSCP values that switches and routers map to priority queues, so that time-sensitive AI operations are served first when bandwidth is contended.
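One common way to implement this prioritization is DiffServ marking. The Python sketch below maps hypothetical AI traffic classes to DSCP code points and sets the IPv4 TOS byte on a socket using the standard `socket.setsockopt` call. The class names and the specific code points chosen (AF41, AF21, AF11) are assumptions for illustration. Use whatever your own QoS policy defines.

```python
import socket

# Hypothetical mapping of AI traffic classes to DiffServ code points (DSCP).
DSCP_BY_CLASS = {
    "inference": 34,      # AF41: interactive, latency-sensitive
    "model_sync": 18,     # AF21: important, tolerates some delay
    "training_bulk": 10,  # AF11: high-throughput bulk transfer
}
DEFAULT_DSCP = 0  # best effort for anything unclassified


def dscp_for(traffic_class: str) -> int:
    return DSCP_BY_CLASS.get(traffic_class, DEFAULT_DSCP)


def tos_byte(dscp: int) -> int:
    # DSCP occupies the upper six bits of the IPv4 TOS byte; the low two bits are ECN.
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be between 0 and 63")
    return dscp << 2


def mark_socket(sock: socket.socket, traffic_class: str) -> None:
    # IPv4 only; IPv6 sockets use the IPV6_TCLASS option instead.
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, tos_byte(dscp_for(traffic_class)))
```

Calling `mark_socket(sock, "inference")` on the socket that carries inference requests tags its outgoing packets. Markings only help if the switches and routers along the path are configured to trust them and map them to priority queues. Many networks rewrite or ignore DSCP values that arrive from hosts.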

Network Segmentation and Bandwidth Allocation

Network segmentation creates isolated pathways for different types of AI traffic, reducing congestion and improving security. By dividing your fiber network into logical segments, you can allocate dedicated bandwidth to specific AI workloads while preventing interference from other network activities. This approach also helps keep a security incident or performance problem in one segment from affecting the others.

Bandwidth allocation should align with the specific requirements of your AI applications. Edge AI systems processing video streams will have different needs than those handling sensor data or running batch analytics. Monitor actual usage patterns and adjust allocations dynamically to ensure optimal resource utilization as workloads evolve.
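As one way to turn per-workload requirements into allocations, the sketch below uses weighted max-min fair sharing, a standard technique that is not necessarily what any given switch or provider implements. No segment receives more than it asks for, and capacity left over by satisfied segments is redistributed by weight. The segment names, demands, and weights are hypothetical.

```python
def allocate_bandwidth(capacity_mbps, demands, weights):
    """Weighted max-min fair split of link capacity across network segments.

    demands: segment -> requested Mbps. weights: segment -> positive priority.
    No segment receives more than it requests; capacity a satisfied segment
    does not need is shared among the rest in proportion to their weights.
    """
    alloc = {seg: 0.0 for seg in demands}
    active = {seg for seg, d in demands.items() if d > 0}
    remaining = float(capacity_mbps)
    while active and remaining > 1e-9:
        total_weight = sum(weights[seg] for seg in active)
        share = {seg: remaining * weights[seg] / total_weight for seg in active}
        satisfied = {seg for seg in active if demands[seg] - alloc[seg] <= share[seg]}
        if not satisfied:
            # Every remaining segment wants more than its share: hand out the shares.
            for seg in active:
                alloc[seg] += share[seg]
            break
        for seg in satisfied:
            need = demands[seg] - alloc[seg]
            alloc[seg] += need
            remaining -= need
        active -= satisfied
    return alloc


# Hypothetical 1 Gbps budget shared by video analytics, sensors, and batch jobs.
print(allocate_bandwidth(1000, {"video": 600, "sensors": 50, "batch": 800},
                         {"video": 3, "sensors": 1, "batch": 1}))
# {'video': 600.0, 'sensors': 50.0, 'batch': 350.0}
```

Re-running the allocation with fresh demand figures from monitoring is one simple way to adjust allocations as workloads evolve.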

Monitoring Tools and Performance Metrics

Effective monitoring is essential for maintaining optimal AI performance across fiber networks. Track key metrics including latency, packet loss, jitter, and throughput to identify potential issues before they impact AI operations. Modern network monitoring tools can provide real-time visibility into how your fiber infrastructure is supporting AI workloads.

Focus on metrics that directly correlate with AI performance. Monitor inference response times, data transfer rates between edge nodes and cloud resources, and network utilization during peak processing periods. Establish baseline performance levels and set alerts for deviations that could indicate capacity constraints or configuration problems.
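A baseline-and-alert check can be quite small. The sketch below summarizes one window of latency probes (median, nearest-rank 95th percentile, jitter, and packet loss) and flags values more than k standard deviations above a baseline. The jitter figure is a plain mean of consecutive differences, which is simpler than the smoothed interarrival-jitter estimator in RFC 3550. The threshold `k=3.0` is an assumption to tune against your own false-alarm tolerance.

```python
import math
import statistics


def summarize(samples_ms):
    """Summarize one window of latency probes; None marks a probe with no reply."""
    received = [s for s in samples_ms if s is not None]
    loss_pct = 100.0 * (len(samples_ms) - len(received)) / len(samples_ms)
    if not received:
        return {"median_ms": None, "p95_ms": None, "jitter_ms": None, "loss_pct": loss_pct}
    ordered = sorted(received)
    # Nearest-rank 95th percentile.
    p95 = ordered[math.ceil(0.95 * len(ordered)) - 1]
    # Simple jitter: mean absolute change between consecutive replies.
    diffs = [abs(b - a) for a, b in zip(received, received[1:])]
    jitter = statistics.fmean(diffs) if diffs else 0.0
    return {"median_ms": statistics.median(received), "p95_ms": p95,
            "jitter_ms": jitter, "loss_pct": loss_pct}


def deviates(current, baseline, k=3.0):
    """True if `current` is more than k standard deviations above the baseline mean."""
    mean = statistics.fmean(baseline)
    return current > mean + k * statistics.pstdev(baseline)


window = summarize([10.0, 12.0, None, 11.0, 30.0])
print(window["p95_ms"], window["loss_pct"])                        # 30.0 20.0
print(deviates(window["p95_ms"], [10.0, 11.0, 12.0, 10.0, 11.0]))  # True
```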

Capacity Planning for Scaling AI Workloads

As AI deployments grow, capacity planning becomes critical to maintaining performance. Bandwidth needs tend to rise with every new model, data source, and edge node, so the fiber network has to be sized for the low-latency, high-capacity traffic that AI workloads generate. Plan for both gradual growth and sudden spikes in demand that can occur when new AI models are deployed or existing ones are updated.

Build headroom into your fiber network design to accommodate future expansion. Consider the trajectory of your AI initiatives and how data volumes, model complexity, and the number of edge nodes might increase over time. Regular capacity assessments help you stay ahead of demand and avoid costly emergency upgrades.
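A first-pass capacity assessment can be done with compound-growth arithmetic. This sketch estimates how many months remain before peak demand crosses a chosen fraction of link capacity. It assumes steady monthly growth and a 70% headroom threshold. Both are illustrative assumptions, and the example figures are hypothetical.

```python
import math


def months_until_threshold(peak_gbps, link_gbps, monthly_growth, threshold=0.7):
    """Months until peak demand crosses `threshold` of link capacity.

    Assumes peak demand grows by a constant fraction each month, which is a
    simplification; real AI traffic often jumps when new models or sites go live.
    """
    limit = threshold * link_gbps
    if peak_gbps >= limit:
        return 0
    if monthly_growth <= 0:
        return math.inf
    # Solve peak * (1 + g) ** m >= limit for the smallest whole month m.
    return math.ceil(math.log(limit / peak_gbps) / math.log(1 + monthly_growth))


# Hypothetical site: 2 Gbps peak today on a 10 Gbps link, growing 5% a month.
print(months_until_threshold(2, 10, 0.05))  # 26
```

Comparing that figure with the provider's lead time for an upgrade shows how early the next capacity order needs to go in.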

Balancing Security and Performance

Security measures are non-negotiable for AI systems, but they shouldn't compromise network performance. Implement security controls that protect AI data and infrastructure without introducing excessive latency or reducing throughput. Encryption, for example, can be optimized by using hardware acceleration to minimize processing overhead.

Design your security architecture to work with your network segmentation strategy. Isolate AI workloads in secure network zones while ensuring that security inspection and filtering processes don't create bottlenecks. Regular security audits should verify that protective measures remain effective without degrading the performance your AI applications depend on.

Practical Deployment Considerations for Fiber-Enabled Edge AI

Deploying fiber networks for edge AI isn't just about ordering connectivity; it's about strategic planning that aligns infrastructure with performance goals. The implementation process requires assessing current capabilities, understanding installation options, and anticipating challenges before they affect your AI operations.

Assessing Your Current Infrastructure

Start by evaluating your existing network architecture. Document current bandwidth utilization, latency measurements, and reliability metrics. Identify where edge AI nodes will be deployed and measure the route distance from each site to the nearest fiber access point. This assessment reveals gaps between what you have and what your AI workloads demand.
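Route distance translates directly into a latency floor, because light travels through glass at roughly two-thirds of its speed in a vacuum. The sketch below converts a fiber route length into round-trip propagation delay. It assumes a group index of 1.468, which is typical of standard single-mode fiber; check your cable's datasheet for the actual value.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_GROUP_INDEX = 1.468  # assumed; typical of standard single-mode fiber


def fiber_rtt_ms(route_km: float, group_index: float = FIBER_GROUP_INDEX) -> float:
    """Round-trip propagation delay over a fiber route, in milliseconds.

    Covers only time-of-flight in the glass; switching, queuing, and
    serialization delays come on top of this.
    """
    one_way_s = route_km * group_index / SPEED_OF_LIGHT_KM_PER_S
    return 2 * one_way_s * 1000


for km in (10, 100, 1000):
    print(f"{km:>5} km route: {fiber_rtt_ms(km):.2f} ms round trip")
```

This works out to roughly 1 ms of round trip per 100 km of route. Cable routes usually run longer than the straight-line distance between sites, so use the provider's route length where you can get it.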

Consider your physical infrastructure constraints early. Building access, conduit availability, and rights-of-way can significantly impact installation timelines. Work with facility managers to understand any restrictions on cable routing or equipment placement.

Choosing Between Dedicated and Shared Fiber

Dedicated fiber connections provide guaranteed bandwidth and performance, making them ideal for mission-critical AI applications where consistent low latency is non-negotiable. Because no other customers share the fiber path, troubleshooting is simpler and there is no risk of other tenants' traffic affecting your AI performance.

Shared fiber options offer cost advantages, particularly for organizations with multiple locations or those testing edge AI deployments before full-scale rollout. Shared infrastructure can still deliver excellent performance when properly configured with quality of service guarantees from your provider.

Colocation Strategies for Edge AI

Colocation facilities offer a middle ground that combines fiber connectivity with physical infrastructure. By placing edge AI nodes in strategically located data centers, you gain access to carrier-neutral fiber networks while avoiding the complexity of managing your own facility.

Evaluate colocation providers based on their fiber carrier options, power density capabilities for AI hardware, and proximity to your data sources. The best colocation strategy positions your edge nodes close to where data originates while maintaining high-speed fiber links back to central processing facilities.
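A weighted scoring matrix is one simple way to compare providers on these criteria. In the sketch below, sites that cannot meet a minimum power density are filtered out. The remaining sites are scored on carrier count, power density, and proximity, with each criterion scaled relative to the best eligible site. The `Site` fields, the weights, and the example data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    carriers: int          # fiber carriers available in the facility
    kw_per_rack: float     # power density the facility can support
    rtt_to_data_ms: float  # measured round trip to the data sources (> 0)


# Hypothetical weights; adjust to your own priorities. They sum to 1.
WEIGHTS = {"carriers": 0.3, "power": 0.3, "proximity": 0.4}


def rank_sites(sites, min_kw_per_rack):
    """Drop sites that cannot power the AI hardware, then rank the rest."""
    eligible = [s for s in sites if s.kw_per_rack >= min_kw_per_rack]
    if not eligible:
        return []
    most_carriers = max(s.carriers for s in eligible) or 1
    most_power = max(s.kw_per_rack for s in eligible) or 1
    best_rtt = min(s.rtt_to_data_ms for s in eligible)

    def score(s):
        # Each criterion is scaled to 0..1 relative to the best eligible site.
        return (WEIGHTS["carriers"] * s.carriers / most_carriers
                + WEIGHTS["power"] * s.kw_per_rack / most_power
                + WEIGHTS["proximity"] * best_rtt / s.rtt_to_data_ms)

    return sorted(eligible, key=score, reverse=True)


candidates = [Site("A", 5, 20, 4.0), Site("B", 2, 30, 1.0), Site("C", 8, 10, 2.0)]
print([s.name for s in rank_sites(candidates, min_kw_per_rack=15)])  # ['B', 'A']
```

Site C has the most carriers but is excluded because it cannot meet the assumed 15 kW per rack. This reflects the point above that power density for AI hardware can be a hard constraint, not just another factor in the score.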

Integration with Existing Networks

New fiber connections must seamlessly integrate with your current network topology. Plan for compatibility between legacy systems and new fiber infrastructure. This often requires edge routers or switches that can handle both traditional connectivity and high-speed fiber interfaces.

Network segmentation becomes critical when integrating fiber-enabled edge AI. Separate AI traffic from general business operations to prevent resource contention and maintain security boundaries. Use VLANs or dedicated fiber paths to isolate AI workloads while allowing controlled data exchange.

Cost Considerations and ROI Calculations

Fiber deployment involves upfront installation costs, monthly connectivity fees, and equipment expenses. Installation costs vary widely based on distance, terrain, and whether existing conduit is available. Monthly fees depend on bandwidth commitments and service level agreements.

Calculate ROI by quantifying the business value of improved AI performance. Faster inference times, reduced latency in decision-making, and higher model accuracy (where better connectivity lets you update models more often or feed them more data) all translate to measurable outcomes. Compare these benefits against the total cost of ownership over a three-to-five-year period.
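The comparison can be sketched as a short function. It assumes costs fall into the three buckets named above (installation, equipment, monthly fees) and that you have already estimated the annual business value of the performance gain. That estimate is the hard part, and no formula can supply it. All figures in the example are placeholders.

```python
def fiber_roi(install_cost, equipment_cost, monthly_fee, annual_benefit, years):
    """Total cost of ownership, ROI, and payback period for a fiber link.

    annual_benefit is your own estimate of the value of better AI performance
    (faster decisions, less downtime); this function cannot supply it.
    """
    months = 12 * years
    upfront = install_cost + equipment_cost
    tco = upfront + monthly_fee * months
    roi = (annual_benefit * years - tco) / tco
    net_monthly = annual_benefit / 12 - monthly_fee
    payback_months = upfront / net_monthly if net_monthly > 0 else float("inf")
    return {"tco": tco, "roi": roi, "payback_months": payback_months}


# Placeholder figures only; substitute quotes from your providers.
print(fiber_roi(install_cost=60_000, equipment_cost=40_000, monthly_fee=2_000,
                annual_benefit=120_000, years=3))
```

Running the same inputs with `years=5` shows how sensitive the result is to the evaluation horizon.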

Don't overlook operational savings. Fiber is immune to electromagnetic interference and typically requires less maintenance than copper-based connectivity while offering better uptime, reducing costs associated with network troubleshooting and AI system downtime.

Timeline Expectations

Fiber installation timelines range from weeks to months depending on complexity. Simple installations leveraging existing infrastructure might complete in 30-60 days. New fiber runs requiring permits, trenching, or complex routing can extend to 90-180 days or longer.

Build buffer time into your project plan for permitting delays, weather impacts, and coordination with multiple stakeholders. Start the fiber procurement process early in your edge AI planning to avoid having hardware ready before connectivity is available.

Addressing Physical Installation Challenges

Physical constraints often present the biggest deployment hurdles. Urban environments may require navigating complex permitting processes and coordinating with municipal authorities. Rural deployments face distance challenges and limited existing infrastructure.

Work closely with connectivity providers who have experience in your deployment geography. They can navigate local regulations, identify the most efficient routing paths, and leverage existing infrastructure to minimize installation complexity.

Building entry points require careful planning. Ensure adequate space for fiber termination equipment, and observe the minimum bend radius specified for each fiber cable. Poor cable management during installation can increase signal loss or create future maintenance headaches.

Coordinating with Connectivity Providers

Successful fiber deployment requires close partnership with your connectivity provider. Clearly communicate your AI performance requirements, including specific latency targets, bandwidth needs, and uptime expectations. Providers can then design solutions that meet these requirements.

Establish clear service level agreements that include performance guarantees, response times for issues, and escalation procedures. For AI workloads, standard business SLAs may not be sufficient—negotiate terms that reflect the critical nature of your applications.
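When negotiating uptime terms, it helps to translate availability percentages into actual minutes of permitted outage. For example, 99.9% still allows about 43 minutes of downtime in a 30-day month. The sketch below does that conversion. It assumes the SLA measures availability over a calendar period, so check how your provider's contract defines the measurement window and any exclusions.

```python
def allowed_downtime_minutes(availability_pct: float, period_days: float = 30) -> float:
    """Maximum downtime an availability target permits over a period, in minutes."""
    return (1 - availability_pct / 100) * period_days * 24 * 60


for target in (99.9, 99.99, 99.999):
    monthly = allowed_downtime_minutes(target)
    yearly = allowed_downtime_minutes(target, period_days=365)
    print(f"{target}%: {monthly:.1f} min per 30 days, {yearly:.0f} min per year")
```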

Plan for regular performance reviews with your provider. As your AI workloads evolve, bandwidth and latency requirements may change. Proactive communication ensures your fiber infrastructure scales alongside your AI initiatives.

Conclusion

Fiber networks aren't just an upgrade for edge AI systems—they're the foundation that makes high-performance AI operations possible. Throughout this guide, we've explored how fiber connectivity delivers the three critical pillars of AI performance: dramatically reduced latency, massive bandwidth capacity, and rock-solid reliability. These aren't incremental improvements; they're transformative capabilities that separate functional AI deployments from truly exceptional ones.

The performance benefits speak for themselves. By minimizing latency, fiber networks enable AI models to process and respond to data in near real-time, which is essential for applications where milliseconds matter. The bandwidth capacity ensures that even the most data-intensive AI workloads can operate without bottlenecks, while the inherent reliability of fiber infrastructure keeps AI operations running continuously without disruption.

Real-World Results

The client example from the introduction shows what this looks like in practice. Their edge AI applications depended on real-time data, but sluggish response times had become a significant bottleneck. Once a robust fiber network was in place, their AI systems could process information in near real-time. That experience reinforced what I've seen time and again: fiber connectivity creates a seamless flow of data that improves AI performance across the board.

Taking Action on Your AI Infrastructure

If you're running or planning edge AI initiatives, now is the time to assess your current network infrastructure. Ask yourself whether your connectivity can truly support the demands of modern AI workloads. Consider fiber not as a luxury, but as an essential investment that directly impacts your AI capabilities and competitive position.

The deployment considerations we've covered—from infrastructure assessment to colocation strategies—provide a roadmap for making fiber-enabled edge AI a reality. While the initial planning and implementation require careful coordination, the performance gains and operational advantages far outweigh the effort.

As we continue to push the boundaries of what's achievable with AI, the importance of network infrastructure can't be overstated. Fiber networks will likely continue to enhance AI capabilities, driving innovation and efficiency across industries. The organizations that recognize fiber as foundational to their AI strategy today will be the ones leading tomorrow's AI-powered transformation.